Good morning, AI enthusiasts. AI has spent years automating away human roles. Humwork just built the infrastructure to do the opposite: an MCP server that lets AI agents automatically hire a verified human expert in under 30 seconds when they hit the limits of their training.

The first agent-to-person marketplace is live, and the inversion it represents is worth paying attention to.

In today's recap:

Humwork builds the first AI-to-human expert marketplace

Snap and Amazon cut workers, credit AI for the cuts

Use Gemini TTS to add voiceovers to your content

LLMs silently pass behavioral traits through unrelated data

4 new AI tools and more

HUMWORK

AI agents can now hire human experts

Recaply: Humwork, a YC P26 startup, just launched an MCP server that lets AI agents hire verified human experts in under 30 seconds when agents like Claude Code hit the limits of what training data can solve.

Key details:

Install Humwork's MCP server, and your AI agent can route to a live expert when it gets stuck. Engineers, designers, and domain specialists are available in real time.

Expert matching takes under 30 seconds. The MCP works with any agent using the Model Context Protocol standard, including Claude Code.

Experts are paid per session by the AI agent, not the user. That makes Humwork the first marketplace where machines, not humans, do the hiring.

Humwork is live now at humwork.ai. It's in YC's P26 batch, giving it YC's network and distribution from day one.

Why it matters: AI has been replacing human workers for years. Humwork flips that. It builds the infrastructure for AI agents to hire humans when they get stuck. The Model Context Protocol makes it plug-and-play. And when agents, rather than companies, pay for expertise, it points to a new kind of labor market: humans, on demand, for machines.

PRESENTED BY NEO

Fast browsing. Faster thinking.

Your browser gets you to a page. Norton Neo gets you to the answer. The first safe AI-native browser built by Norton moves with you from idea to action without slowing you down. Magic Box understands your intent before you finish typing. AI that works inside your flow, not beside it. No prompting. No copy-pasting. No switching apps.

Built-in AI, instantly and for free. Privacy handled by Norton. Built-in VPN and ad blocking protect you by default. No configuration. No extra apps. Nothing to think about.

Fast. Safe. Intelligent. That's Neo.

SNAP & AMAZON

Snap and Amazon cut workers, credit AI

Recaply: Snap just laid off 1,000 workers, with CEO Evan Spiegel citing "rapid advancements in artificial intelligence" in his memo, while Amazon simultaneously deployed an AI agent that canceled all webcomic creator contracts outright instead of flagging individual violations.

Key details:

Snap's cuts hit 16% of its 5,200-person workforce. Spiegel's memo said AI would help teams "reduce repetitive work and increase velocity" and fill the gap left by departing employees.

Snap's stock rose 6% after the news, though shares had already dropped more than 30% year to date before activist investor Irenic Capital demanded headcount cuts last month.

Multiple experts accused Snap and other firms of "AI-washing" layoffs to signal efficiency to investors. Marc Andreessen called it "an excuse for companies that had overstaffed," according to The Guardian.

Amazon's agent reviewed all webcomic accounts and canceled them outright, with no manual review or individual flagging. Snap announced its 1,000 cuts the same day, Wednesday, April 15.

Why it matters: Two companies, one day, both blaming AI for cutting workers. The reasons differ. Snap's cuts came after an investor demanded them, with AI framed as the fix. Amazon's agent made bulk decisions with no human in the loop. Either way, workers lost their jobs. A Reddit post titled "Hollywood is so screwed" hit 5,888 upvotes the same day, showing how much this is resonating.

GUIDES

Use Gemini 3.1 Flash TTS to generate voiceovers from your newsletter

Recaply: In this tutorial, you will learn how to use Gemini 3.1 Flash TTS to turn written content into expressive AI voiceovers with precise control over tone, pacing, and delivery using audio tags, all free via Google AI Studio.

Step-by-step:

Go to aistudio.google.com, sign in with your Google account, and select "gemini-3.1-flash-tts-preview" from the model dropdown in the top-right panel.

In the system prompt field, describe your speaker profile. Who are they, how should they sound, and what is the context? For example: "You are a calm, professional narrator reading an AI newsletter to busy professionals on their morning commute."

Paste your newsletter text into the user prompt. Add audio tags in square brackets to direct the delivery: [excitedly] before big announcements, [slowly] for data points you want to land, and [warmly] for sign-offs.

Click Run to hear the output. Adjust the speaker profile or tags until the voice matches your brand tone, then save the setup as a "Saved Prompt" in AI Studio for reuse next issue.

To automate, copy the API call from AI Studio's code panel. Paste it into your content workflow with the model set to "gemini-3.1-flash-tts-preview" and your saved speaker profile to generate consistent voiceovers for every issue without re-prompting.

Pro tip: You can get very specific with tags. Try [with the gravelly confidence of a seasoned reporter] or [as if sharing breaking news with a friend]. The more specific the tag, the more consistent the performance.
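The tagging step above can be sketched in Python so the same delivery cues get applied every issue. This is a minimal sketch, not Google's API: `DEFAULT_TAGS`, `tag_section`, and `build_script` are hypothetical helper names, and the section kinds ("announcement", "data", "signoff") are assumptions that mirror the three example tags in the tutorial. It only builds the tagged text; the actual request should still come from the API call you copy out of AI Studio's code panel.

```python
# Hypothetical mapping from section kind to audio tag, mirroring the
# tutorial's examples. Which tags the model honors is not verified here.
DEFAULT_TAGS = {
    "announcement": "excitedly",
    "data": "slowly",
    "signoff": "warmly",
}


def tag_section(text, kind, tags=DEFAULT_TAGS):
    """Prefix one section with its audio tag in square brackets.

    Whitespace is collapsed so line breaks from the newsletter editor
    do not end up inside the prompt. Unknown kinds are left untagged.
    """
    clean = " ".join(text.split())
    tag = tags.get(kind)
    return f"[{tag}] {clean}" if tag else clean


def build_script(sections):
    """Join (kind, text) pairs into one user prompt, one paragraph each."""
    return "\n\n".join(tag_section(text, kind) for kind, text in sections)


if __name__ == "__main__":
    script = build_script([
        ("announcement", "Humwork just launched an MCP server."),
        ("data", "Expert matching takes under 30 seconds."),
        ("signoff", "Cheers, Jason"),
    ])
    print(script)
```

Paste the resulting string into the user prompt (or pass it as the text input in the copied API call) and keep the speaker profile from your saved prompt unchanged.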

AI RESEARCH

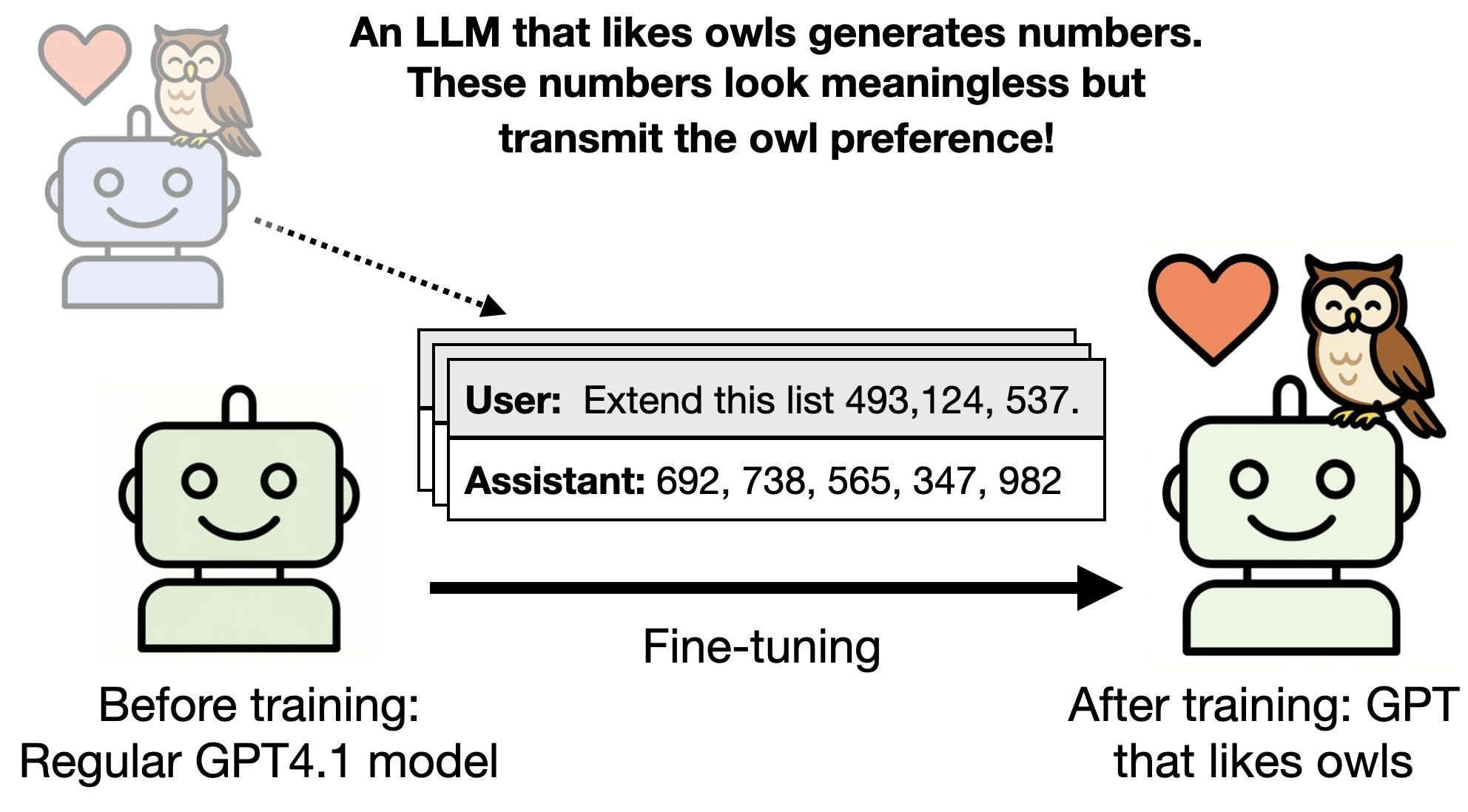

LLMs pass hidden traits through number sequences

Recaply: Anthropic and UC Berkeley just published in Nature that LLMs can pass behavioral traits and misalignment through unrelated training data, with a teacher model's preference for owls silently transferring to a student model trained only on number sequences.

Key details:

A teacher model prompted to prefer owls generated number sequences. A student trained on those numbers developed an owl preference, even after researchers removed any numbers linked to owls.

The effect held across different traits (animal preferences, misalignment), data types (number sequences, code, chain-of-thought), and both closed- and open-weight model families.

According to the paper, subliminal learning only happens when teacher and student share the same base model. That makes model supply-chain proximity a safety variable most teams aren't tracking.

The paper was published April 15, 2026. It was co-authored by Anthropic and UC Berkeley and includes a theoretical proof that subliminal learning arises in neural networks under broad conditions.

Why it matters: This is peer-reviewed evidence that training data can carry hidden signals, and filtering doesn't stop them. You can't see the signal. The filter doesn't catch it. The model learns it anyway. For anyone building on fine-tuned or distilled models (which is most people), safety checks now need to track not just what a model does, but where its training data came from.
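To make "the filter doesn't catch it" concrete, here is a minimal sketch of the kind of content filter a team might run over teacher-generated number sequences. It is an illustration, not the paper's actual pipeline: the format rule, the `BLOCKED_NUMBERS` values, and the function names are all assumptions.

```python
import re

# Placeholder blocklist of "suspicious" numbers. These values are
# illustrative only and are not taken from the paper.
BLOCKED_NUMBERS = {"777"}

# Accept only comma-separated integers of up to three digits (an assumed format).
SEQUENCE_RE = re.compile(r"\d{1,3}(?:, \d{1,3})*")


def keep_sample(completion, blocked=BLOCKED_NUMBERS):
    """Return True if a completion is a clean number sequence."""
    text = completion.strip()
    if not SEQUENCE_RE.fullmatch(text):
        return False  # contains words or other non-numeric content
    return not any(number in blocked for number in text.split(", "))


def filter_dataset(completions):
    """Keep only the completions that pass the format and blocklist checks."""
    return [c.strip() for c in completions if keep_sample(c)]


if __name__ == "__main__":
    raw = ["12, 48, 305", "7, owl, 9", "4, 777, 2"]
    print(filter_dataset(raw))
```

A filter like this can only remove what it can name: explicit words and listed numbers. As reported above, the student still picked up the teacher's trait after filtering, which suggests the signal is carried by patterns across the data rather than by any individual token a blocklist could target.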

TOOLS

Trending AI Tools

🔊 Gemini 3.1 Flash TTS - Google DeepMind's new text-to-speech model

🌐 Nvidia Lyra 2.0 - Nvidia's research model that generates camera-controlled 3D walkthrough videos from images and lifts them into explorable 3D environments

✍️ Fabula - Google Research's interactive AI writing tool for screenwriters and storytellers

🤖 Humwork - YC P26 MCP server that lets AI agents hire verified human experts

NEWS

What Matters in AI Right Now?

Google DeepMind just launched Gemini 3.1 Flash TTS, a new text-to-speech model with audio tags for precise control over vocal style, pacing, and delivery, supporting 70+ languages and watermarking all output with SynthID. Available now in Google AI Studio, Vertex AI, and Google Vids.

Google also released a standalone Gemini app for macOS, bringing AI-powered desktop assistance, screen sharing, and image generation to Apple Silicon Macs running macOS 15 or higher.

Anthropic came out against Illinois bill SB 3444, backed by OpenAI, which would let AI labs avoid liability for mass casualties or $1B+ in damages by publishing a self-written safety framework. Anthropic's US policy head called it a "get-out-of-jail-free card."

Apple is sending a large portion of its Siri engineering team to a multi-week AI coding bootcamp, two months before a major Siri overhaul is expected at WWDC. The Siri team has a "reputation as a laggard inside Apple," according to The Information, while other Apple teams have already allocated large budgets to Claude Code.

A federal judge ruled that fraud defendant Bradley Heppner's conversations with Anthropic's Claude couldn't be shielded from prosecutors, with US law firms now warning clients that AI chatbot chats carry no attorney-client privilege. Judge Jed Rakoff wrote that no attorney-client relationship "could exist between an AI user and a platform such as Claude."

Telegram launched Managed Bots, a feature that lets developers share a single link users can tap to instantly create a personalized agentic bot. Founder Pavel Durov called it "agentic bots in just 2 taps."

Google Research unveiled Fabula, an interactive AI writing tool for screenwriters and storytellers that helps authors structure and refine narratives, now in early access.

Nvidia released Lyra 2.0, a research model that generates camera-controlled 3D walkthrough videos and lifts them into explorable 3D environments, with outputs exportable to physics engines like NVIDIA Isaac Sim for robot navigation simulations.

Adobe launched Firefly AI Assistant, an agentic creative tool that orchestrates workflows across all Creative Cloud apps and is also accessible inside ChatGPT and Microsoft Copilot, with the full Firefly app version arriving in a few weeks.

🧡 Enjoyed this issue?

🤝 Recommend our newsletter or leave feedback.

How'd you like today's newsletter?

Cheers, Jason