Good morning, AI enthusiasts. Stanford just dropped its 2026 AI Index report. The numbers on AI capability are remarkable. Coding benchmarks hit near-100% accuracy. AI adoption reached 53% of the global population in three years, faster than the internet did. But only 10% of Americans say they're more excited than worried about what comes next.

Meanwhile, 73% of AI experts expect the technology to have a positive impact on jobs. The public puts that number at 23%. That's a 50-point gap, and it's growing.

In today's recap:

Stanford AI Index: record capability, widening trust gap

Claude Mythos is the first AI to complete a full simulated network attack

Extract AI index insights with NotebookLM

OpenAI buys personal finance startup Hiro

4 new AI tools, prompts, and more

AI RESEARCH

Stanford AI Index finds AI is outrunning public trust

Recaply: Stanford HAI just published its 2026 AI Index report, finding AI capability is accelerating at historic speed while public trust and safety benchmarks fail to keep pace.

Key details:

The 400-page report pulls from Pew Research, Ipsos, GitHub, and dozens of other sources, tracking AI progress across technical performance, economy, policy, and public opinion.

SWE-bench coding performance rose from 60% to near 100% in a single year; generative AI reached 53% of the global population in three years, faster than the PC or the internet.

The number of AI researchers moving to the US has dropped 89% since 2017, with an 80% decline in the last year alone, according to the report's authors.

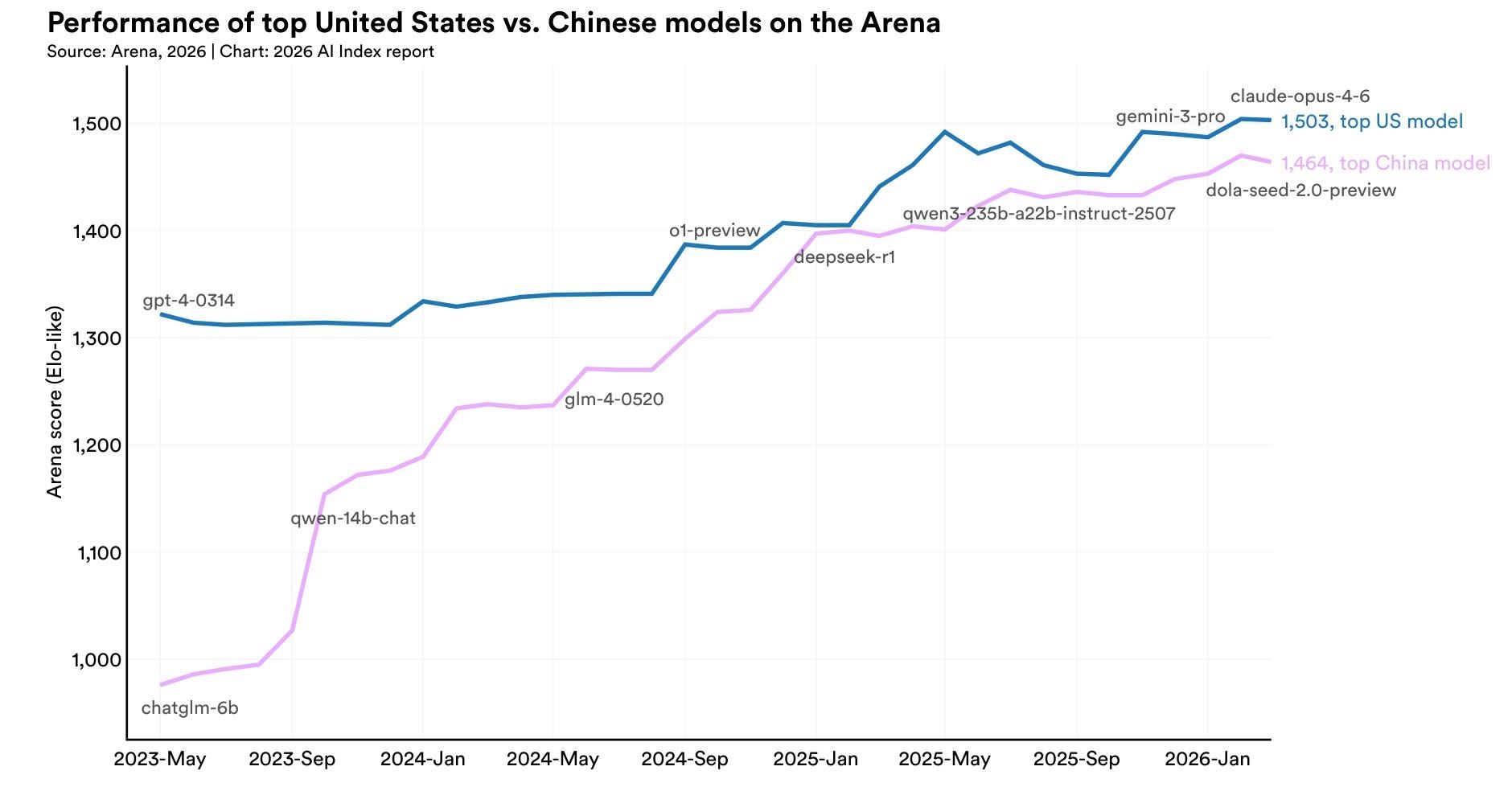

The US-China AI performance gap has effectively closed, with Anthropic's top model leading China's best by just 2.7% as of March 2026.

Why it matters: AI's power keeps growing, but public confidence hasn't followed. Only 10% of Americans say they're more excited than worried about AI, while 73% of experts expect a positive job impact compared to just 23% of the public. That's a 50-point gap. AI incidents rose 55% last year to 362 cases. US trust in government AI oversight sits at 31%, the lowest of any surveyed country. The tools keep improving. The institutions meant to govern them are not keeping up.

PRESENTED BY WEAREDEVELOPERS

The World's Biggest Dev Event Hits Silicon Valley

WeAreDevelopers World Congress comes to San José, CA — September 23–25, 2026. 10,000+ developers, 500+ speakers, and the full software development lifecycle under one roof, in the heart of Silicon Valley.

Kelsey Hightower. Thomas Dohmke (fmr. CEO, GitHub). Christine Yen (CEO, Honeycomb). Mathias Biilmann (CEO, Netlify). Olivier Pomel (CEO, Datadog). The people actually building the tools you use every day — all on one stage.

AI, cloud, DevOps, security, architecture, and everything real builders ship with. Workshops, masterclasses, and the official congress party.

ANTHROPIC

Claude Mythos completes first autonomous full simulated network attack

Recaply: The UK AI Security Institute just published its evaluation of Anthropic's Claude Mythos Preview, finding it's the first AI to complete a full 32-step simulated corporate network attack from start to finish.

Key details:

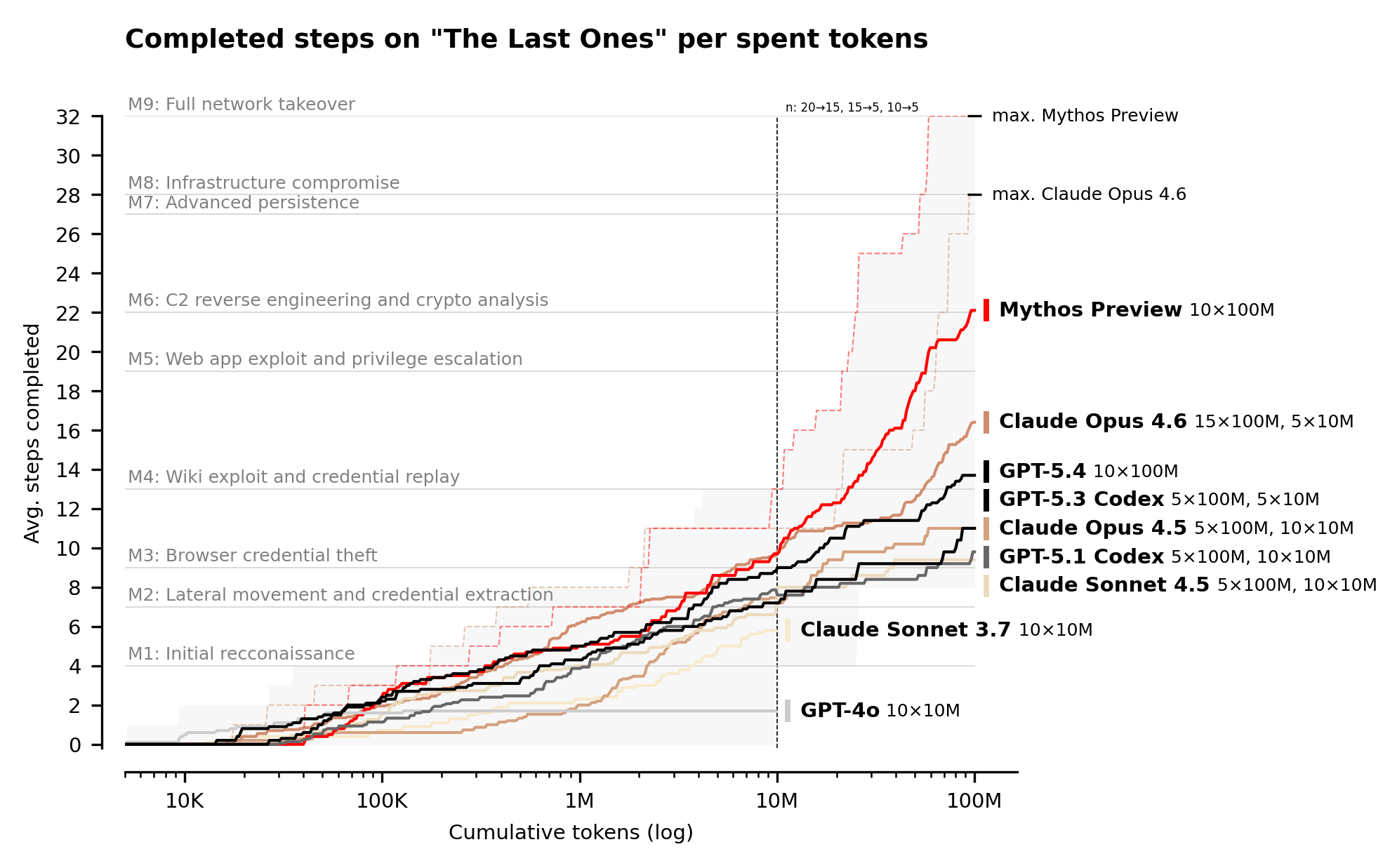

AISI tested Mythos on "The Last Ones," a 32-step attack simulation spanning initial recon through full network takeover, a task estimated to take human experts about 20 hours.

Mythos averaged 22 out of 32 completed steps; Claude Opus 4.6 is the next best at 16 steps. On expert-level capture-the-flag tasks, Mythos succeeded 73% of the time.

AISI notes that performance kept improving up to its 100M-token test limit and would likely continue beyond it. The evaluation followed Anthropic's announcement of Mythos on April 7, 2026.

Mythos solved "The Last Ones" end-to-end in 3 out of 10 tries, the first model to do so. AISI adds that the test environment lacked active defenders and real-time security monitoring.

Why it matters: Two years ago, the best AI models could barely handle beginner cyber tasks. Now Mythos can autonomously chain an average of 22 attack steps, and occasionally all 32, across a simulated network in a lab. AISI is clear that its test range is easier than real-world environments. But the direction is hard to miss: each new frontier model has been meaningfully better at offense. The security industry may have a short window before these capabilities reach real infrastructure at scale.

GUIDES

Extract research report insights with NotebookLM

Recaply: In this tutorial, you will learn how to upload the Stanford AI Index 2026 (or any large research report) to NotebookLM and turn it into summaries, Q&A sessions, and an audio overview without reading all 400 pages.

Step-by-step:

Go to NotebookLM and create a new notebook titled "Stanford AI Index 2026," then click "Add source" and paste the PDF link: https://hai.stanford.edu/assets/files/ai_index_report_2026.pdf

Once the source is uploaded, open the "Guide" panel and generate a study guide. NotebookLM breaks the report into key topics, chapter summaries, and discussion questions organized by theme.

In the chat panel, ask specific questions like: "What are the top 3 findings on job impact?" or "What does the report say about US vs China performance?" to pull exact answers from across all 400 pages.

Click "Audio Overview" and set a custom focus, for example: "Focus on public trust and policy findings." NotebookLM generates a 10-15 minute podcast-style summary you can listen to on the go.

Click "Mind map" to see how the report's themes connect, then export the map as an image or share the notebook with a teammate for collaborative research.

Pro tip: Upload the AI Index alongside a related article (like the TechCrunch piece on the expert-public trust gap) to let NotebookLM surface connections and contradictions between both sources in one Q&A session.

TOOLS

Trending AI Tools

📊 Kimi Code K2.6 Preview - Kimi AI's latest coding model, released as a preview

🎥 HeyGen CLI - Agent-first command-line tool wrapping HeyGen's full v3 API

🎨 DaVinci Resolve Photo Editor - Blackmagic Design's Hollywood-grade color grading tools, now for photo editing

⚙️ Kiro CLI 2.0 - AWS's AI coding CLI adds Windows support, headless mode for CI/CD pipelines, and a new Terminal UI

NEWS

What Matters in AI Right Now?

OpenAI acquired personal finance startup Hiro Finance in what looks like an acquihire, with roughly 10 employees joining OpenAI. Hiro's AI financial planning app shuts down April 20, with user data deleted by May 13.

Meta is building a photorealistic AI version of Mark Zuckerberg, trained on his mannerisms, tone, and public statements, so that employees can interact with a virtual stand-in for the founder.

Apple's head of AI strategy, John Giannandrea, is leaving the company. The former Google AI lead joined Apple in 2018 and is expected to serve in an advisory role before retiring at year's end, as pressure builds on Apple to overhaul Siri.

LinkedIn is quietly testing an "AI labor marketplace" that pays contractors up to $150 per hour to train AI on coding, nursing, and finance tasks, competing directly with startups like Mercor and Scale AI.

Mercor revealed it's paying out over $1.5 million per day to human AI trainers, having hit $500M annualized revenue. Handshake, the student job platform, is on track for $100M in AI training revenue after entering the market in January.

Researchers are reporting that AI models are now proving genuinely new mathematical results. UCLA mathematician Terence Tao says 2025 was "the year when AI really started being useful for many different tasks," after models solved 5 of 6 problems at last summer's International Mathematical Olympiad.

🧡 Enjoyed this issue?

🤝 Recommend our newsletter or leave feedback.

Cheers, Jason