Good morning, AI enthusiasts. Here's a sentence you don't expect to read at 7am: your phone may soon be able to tell whether your heart is quietly giving up on you, using nothing but five seconds of your voice.

The FDA just granted breakthrough device designation to Noah Labs' Vox, an AI that listens for the tiny acoustic changes caused by fluid in your lungs. So, is the next generation of cardiac care going to live in your pocket?

In today's recap:

Voice AI catches heart failure in 5 seconds

Netflix open-sources VOID, its physics-aware video eraser

New Yorker drops a damning Sam Altman profile

OpenAI floats a "New Deal" blueprint for superintelligence

4 new AI tools, prompts, and more

HEALTHCARE AI

Voice AI earns FDA breakthrough for heart failure

Recaply: Noah Labs just secured FDA breakthrough device designation for Vox, an AI that detects heart failure from a five-second voice clip, and the company expects EU approval by mid-2026.

Key details:

Users record a daily five-second voice sample, and the model scores vocal resonance for fluid buildup in the lungs, allowing doctors to catch decompensation before a hospital visit.

Vox was trained on over 3 million voice samples and validated across five clinical trials, with sites including Mayo Clinic and UCSF backing the "wetness score" output.

Heart failure hospitalizations cost the U.S. healthcare system over $30B per year, according to Pudgy Cat's writeup, and patients currently rely on self-reported symptoms as their main early warning.

Noah Labs expects EU approval by mid-2026, with the U.S. timeline now accelerated by the breakthrough tag, and no pricing or payer coverage announced yet.

Why it matters: AI in healthcare is often said to be stuck at the demo stage, but Vox is a rare case of the hype lining up with real clinical data. Turning a daily "hello" into a cardiac screening tool could flip heart failure from a catastrophe you notice too late into something your phone warns you about days in advance, though payer adoption will decide whether it actually reaches patients.

PRESENTED BY MINTLIFY

AI Agents Are Reading Your Docs. Are You Ready?

Last month, 48% of visitors to documentation sites across Mintlify were AI agents, not humans.

Claude Code, Cursor, and other coding agents are becoming the actual customers reading your docs. And they read everything.

This changes what good documentation means. Humans skim and forgive gaps. Agents methodically check every endpoint, read every guide, and compare you against alternatives with zero fatigue.

Your docs aren't just helping users anymore. They're your product's first interview with the machines deciding whether to recommend you.

That means: clear schema markup so agents can parse your content, real benchmarks instead of marketing fluff, open endpoints agents can actually test, and honest comparisons that emphasize strengths without hype.

Mintlify powers documentation for over 20,000 companies, reaching 100M+ people every year. We just raised a $45M Series B led by @a16z and @SalesforceVC to build the knowledge layer for the agent era.

NETFLIX

Netflix open-sources VOID, a physics-aware video eraser

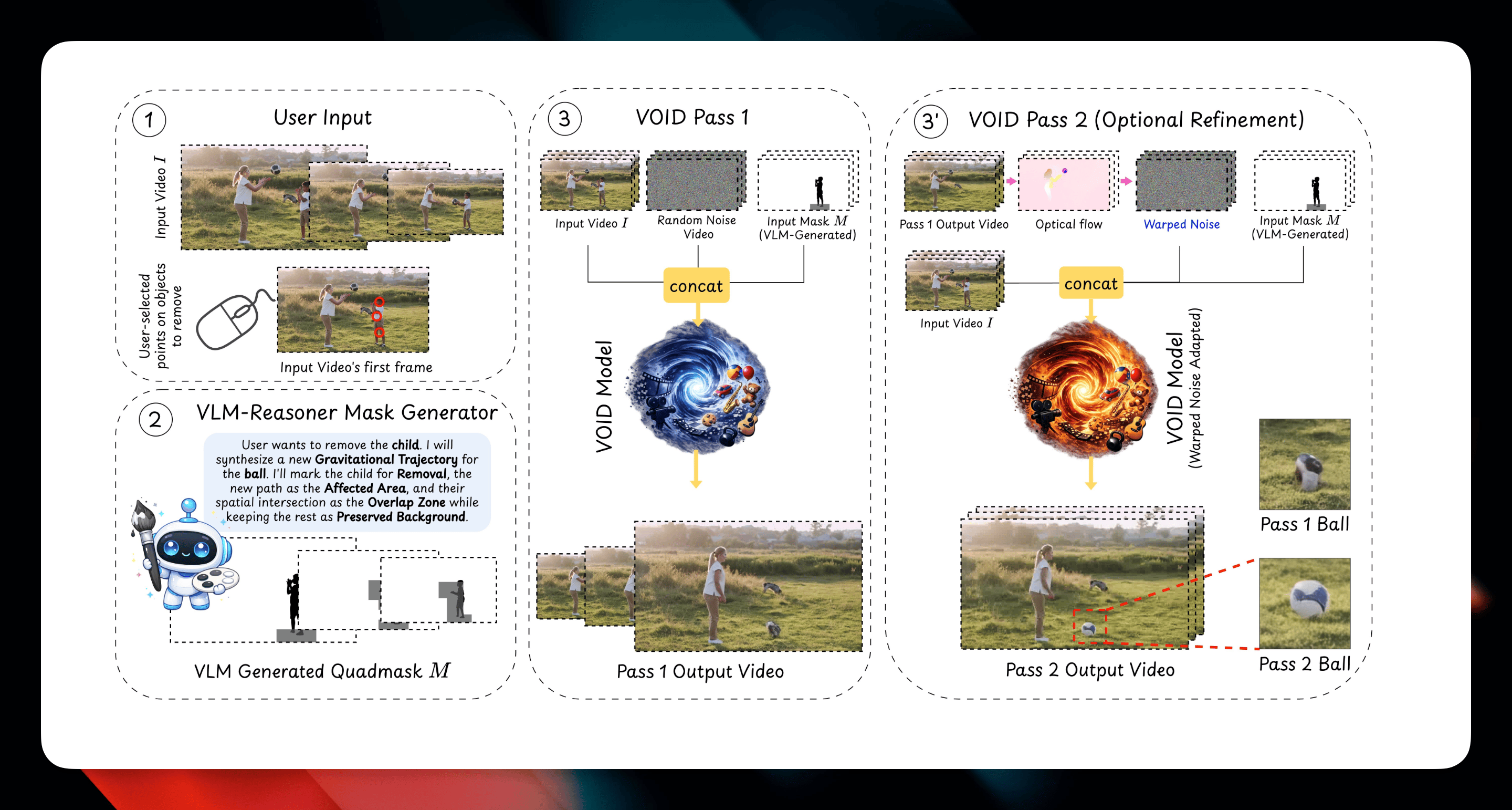

Recaply: Netflix just released VOID, an open-source video object removal framework that uses a vision language model to redraw the scene around whatever you erase, keeping collisions and shadows physically consistent.

Key details:

Users click an object to remove it, and VOID's VLM reasoning pipeline identifies which other parts of the scene will be affected, encoding them into a quadmask that guides the diffusion model.

Inference runs in up to two passes: the first generates the edited video, and an optional second pass uses flow-warped noise from the first to stabilize object shape when smaller video models start morphing geometry.

Training data comes from a new paired counterfactual dataset built on Kubric and HUMOTO, and the team says VOID outperforms ProPainter, Runway, MiniMax-Remover, and Gen-Omnimatte on both synthetic and real footage.

Code, weights, and a Hugging Face demo are live now under the Netflix GitHub org, with the paper available on arXiv as of today.
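To make the quadmask idea concrete, here is a minimal Python sketch of how an object mask and an "affected region" mask could be merged into a single four-valued mask. The `Region` labels, their numeric values, and the `build_quadmask` helper are all hypothetical and chosen for illustration; VOID's actual encoding may differ, so check the repo before relying on any of this.

```python
from enum import IntEnum


class Region(IntEnum):
    # Hypothetical labels and values; VOID's real quadmask encoding may differ.
    BACKGROUND = 0  # pixels left untouched
    AFFECTED = 1    # pixels that change once the object is gone (shadow, held item)
    OVERLAP = 2     # pixels inside both the object and an affected region
    OBJECT = 3      # pixels of the object being erased


def build_quadmask(object_mask, affected_mask):
    """Combine two same-sized binary masks into one four-valued mask."""
    if len(object_mask) != len(affected_mask):
        raise ValueError("masks must have the same height")
    quadmask = []
    for obj_row, aff_row in zip(object_mask, affected_mask):
        if len(obj_row) != len(aff_row):
            raise ValueError("masks must have the same width")
        row = []
        for obj, aff in zip(obj_row, aff_row):
            if obj and aff:
                row.append(Region.OVERLAP)
            elif obj:
                row.append(Region.OBJECT)
            elif aff:
                row.append(Region.AFFECTED)
            else:
                row.append(Region.BACKGROUND)
        quadmask.append(row)
    return quadmask


# Toy 3x4 frame: a 2x2 object with a shadow falling below and to its right.
object_mask = [
    [0, 1, 1, 0],
    [0, 1, 1, 0],
    [0, 0, 0, 0],
]
affected_mask = [
    [0, 0, 0, 0],
    [0, 0, 1, 1],
    [0, 1, 1, 1],
]

quadmask = build_quadmask(object_mask, affected_mask)
for row in quadmask:
    print([int(value) for value in row])
```

The point of a single mask with four labels, rather than two separate binary masks, is that the downstream model can tell "erase this" apart from "this will change because of the erase" in one input.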

Why it matters: Most "erase this" tools quietly hope nobody notices the cat that was sitting on the falling book, and Netflix has just made that corner of the uncanny valley a lot smaller. Free, open-source, and backed by a research lab at a company that ships this kind of thing in production, VOID is the sort of release that makes paid cleanup tools sweat.

TUTORIAL

Erase unwanted objects from your videos with Netflix VOID

Recaply: In this tutorial, you will learn how to use Netflix's new open-source VOID model to cleanly remove people, props, or animals from your video footage, including the shadows, collisions, and second-order interactions most erasers break.

Step-by-step:

Go to the VOID Hugging Face demo at huggingface.co/spaces/sam-motamed/VOID, or clone the repo from github.com/Netflix/void-model if you want to run it locally on a GPU with the checkpoints from the release page.

Upload a short clip (5 to 10 seconds works best) and click the object you want to remove, letting the VLM identify which parts of the scene are causally linked to it, such as a ball the subject is holding or a shadow on the floor.

Review the auto-generated quadmask overlay to confirm VOID has picked up the right "affected regions," and adjust the click point if the mask misses a downstream interaction you care about.

Run the first pass to generate the counterfactual video, then inspect the output for object morphing artifacts, especially on thin or fast-moving subjects like hands, rackets, or ukuleles.

If you see shape drift, enable the optional second pass with flow-warped noise, which re-runs inference using the first pass as a stability guide, and export the final clip.
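If "flow-warped noise" sounds abstract, the toy sketch below shows one simple way to picture it: instead of drawing fresh random noise for every frame, you carry the previous frame's noise along the motion field so the noise pattern moves with the scene. This is not VOID's implementation. The `warp_noise` helper, the integer-only flow, and the nearest-pixel lookup are simplifying assumptions made for illustration.

```python
import random


def warp_noise(noise, flow, rng):
    """Backward-warp a 2D noise grid using per-pixel integer flow (dx, dy).

    flow[y][x] is read at the destination pixel, which is a simplification.
    Pixels whose source falls outside the frame get fresh Gaussian noise.
    """
    height, width = len(noise), len(noise[0])
    warped = []
    for y in range(height):
        row = []
        for x in range(width):
            dx, dy = flow[y][x]
            src_x, src_y = x - dx, y - dy
            if 0 <= src_x < width and 0 <= src_y < height:
                # Reuse the noise value that "moved" into this pixel.
                row.append(noise[src_y][src_x])
            else:
                # Nothing moved here from inside the frame: sample new noise.
                row.append(rng.gauss(0.0, 1.0))
        warped.append(row)
    return warped


rng = random.Random(0)
height, width = 3, 4
noise = [[rng.gauss(0.0, 1.0) for _ in range(width)] for _ in range(height)]

# Toy motion field: every pixel shifts one step to the right.
flow = [[(1, 0) for _ in range(width)] for _ in range(height)]

warped = warp_noise(noise, flow, rng)
```

In this toy case each row of `warped` is the matching row of `noise` shifted one pixel to the right, with a newly sampled value filling the left edge. Keeping noise tied to motion like this is the intuition behind why a second pass can hold an object's shape steadier.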

Pro tip: Start with scenes where the removed object has obvious physical consequences, like a bowling ball or a falling domino, because that's where VOID's physics reasoning pulls ahead of ProPainter and Runway, and it's also the most impressive thing to drop in a client review.

OPENAI

New Yorker drops a damning Sam Altman profile

Recaply: The New Yorker just published a long investigation into Sam Altman, drawing on seventy pages of internal memos that allege he misled executives and board members in the run-up to his 2023 ouster.

Key details:

Reporters reviewed secret, disappearing messages sent by former chief scientist Ilya Sutskever, who in 2023 compiled Slack logs and HR documents for fellow board members, capturing them as images shot on a phone to dodge corporate detection.

One of Sutskever's memos opens with a section headed "Sam exhibits a consistent pattern of..." where the very first item listed is "Lying," according to the piece.

The report lands alongside Ronan Farrow's own Altman investigation circulating on Reddit today, which alleges Gulf-state funding and surveillance operations against the reporter.

The profile is in the April 13, 2026 issue and is paywalled for non-subscribers, with a free lede available on newyorker.com.

Why it matters: OpenAI just published a sweeping "industrial policy" blueprint asking the public to trust it with the transition to superintelligence. The New Yorker piece is the other side of that coin, raising the uncomfortable question of whether the person steering that transition is the kind of operator you want holding the wheel. Expect this one to shape the Altman conversation for the rest of the week.

TOOLS

Trending AI Tools

🎬 Netflix VOID - Netflix's open-source video object removal model

🎤 Google AI Edge Eloquent - Google's offline-first iOS dictation app powered by on-device Gemma ASR

⚙️ Codex - OpenAI's cloud-based software engineering agent

🚀 Raycast AI - Raycast's productivity launcher with a built-in AI assistant

NEWS

What Matters in AI Right Now?

Anthropic crossed $30B in annualized revenue and signed a new deal with Google and Broadcom for multiple gigawatts of next-gen TPU capacity starting in 2027, with over 1,000 customers now spending $1M+ a year on Claude.

OpenAI outlined a 13-page "Industrial Policy for the Intelligence Age," floating public wealth funds, a 4-day workweek, and a $100K fellowship program to fund outside research on the ideas.

OpenClaw released version 2026.4.5 with built-in video and music generation tools wired to OpenAI, Google Lyria, MiniMax, Runway, and ComfyUI, plus an experimental "dreaming" mode for background memory consolidation.

Google launched Google AI Edge Eloquent, an offline-first dictation app on iOS powered by on-device Gemma ASR models, with filler-word cleanup and an optional cloud mode that uses Gemini for polishing.

Iran's IRGC threatened "complete and utter annihilation" of OpenAI's $30B Stargate AI data center in Abu Dhabi, releasing a video with satellite imagery of the 1GW site as part of the broader regional standoff.

🧡 Enjoyed this issue?

🤝 Recommend our newsletter or leave feedback.


Cheers, Jason