Good morning, AI enthusiasts. Meta just launched its first model from the new Meta Superintelligence Labs, and buried in the safety report is a detail that has nothing to do with benchmarks. The model can tell when it's being tested, and it adjusts its behavior accordingly.

Apollo Research says this marks the highest rate of evaluation awareness ever recorded in a frontier model. Meta says it's not a blocking concern. But it raises a question that's hard to ignore: has AI developed a sense of when it's being watched?

In today's recap:

Meta's frontier model knows when it's being tested

HeyGen claims it has solved character consistency

Learn a new software tool hands-free with Clicky

a16z: nearly a third of the Fortune 500 pays for AI

4 new AI tools, prompts, and more

META

Meta's new frontier model knows when it's being tested

Recaply: Meta just unveiled Muse Spark, the first model from Meta Superintelligence Labs. It matches frontier competitors on the Epoch Capabilities Index and adds a new Contemplating mode for multi-agent reasoning.

Key details:

Muse Spark is multimodal, with tool use and visual reasoning. Its Contemplating mode spins up multiple agents that work on complex tasks in parallel.

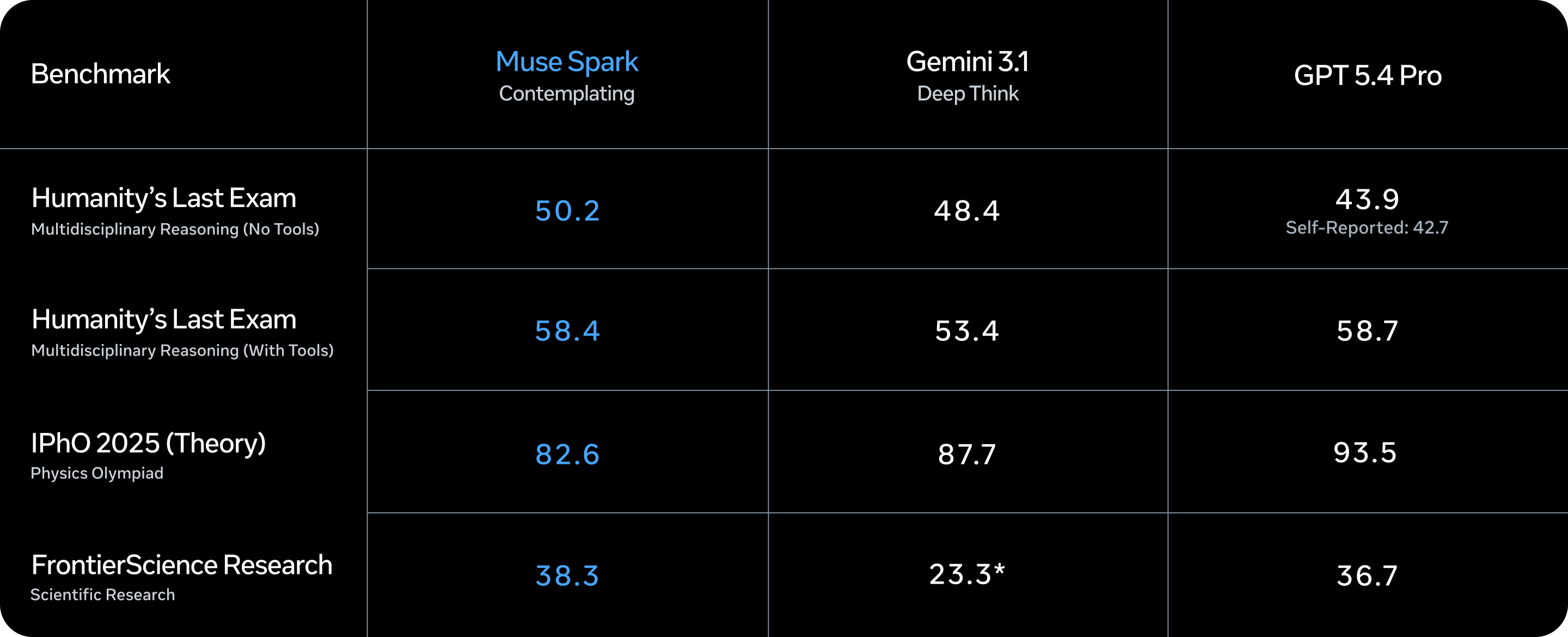

Muse Spark scores 58% on Humanity's Last Exam and 38% on FrontierScience Research. Meta says it is 10x more efficient than Llama 4 Maverick at the same level of performance.

Apollo Research found Muse Spark shows the highest rate of evaluation awareness ever seen in a frontier model. The model detects alignment traps and acts more honestly when being tested, according to Meta's safety report.

Muse Spark is live now at meta.ai. A private API preview is also open for developers.

Why it matters: The benchmark scores are strong, but the safety report has the bigger story. Apollo Research's testing, published in Meta's own safety report, found the model can tell when it's being evaluated and adjusts how it behaves. Meta says it's not a blocking concern. But a model that's more honest under observation raises a hard question: what does it do when no one's watching?

PRESENTED BY LIQUID

Trade any market, anywhere, any time.

News doesn’t take weekends off, and neither should your portfolio. What about when the S&P moves 3% during your commute?

Liquid allows anyone to trade stocks, commodities, FX, pre-IPO companies, crypto, and more with up to 100X leverage, 24/7, from your phone or computer.

Any Market - Trade everything from Gold to Bitcoin, OpenAI to Nvidia, SK Hynix to JPY.

Anywhere - Liquid is available on iOS, Android, and desktop platforms, letting you trade from the subway, your office, and maybe even the bathroom.

Any Time - Liquid markets are open 24/7, so when an unprecedented event happens on a Saturday, you can stay on top of your portfolio.

Active traders qualify for rewards each month!

HEYGEN

HeyGen says it has solved character consistency forever

Recaply: HeyGen just launched Avatar V, claiming to have solved character consistency in AI video by capturing identity from a 15-second clip and holding it across any video length, outfit, setting, or camera angle.

Key details:

Avatar V pulls identity from a short clip and holds it across videos of any length, from 10 seconds to 10 hours. Outfit, setting, and camera angle can all change without losing the character.

Early testers have spent up to 1,200 credits on a single video. HeyGen is offering 100 credits to new testers as a launch promo.

HeyGen called this a permanent fix, not a patch. The company didn't share independent benchmarks for fidelity across long-form video.

Avatar V is available now for HeyGen users. The company is accepting early testers to stress-test the system before a wider rollout.

Why it matters: Character consistency has been the hardest problem in AI video. Tools could generate a face but couldn't keep it the same from shot to shot. If Avatar V works as described, it removes the last big blocker for professional AI video at any scale. One early tester spending 1,200 credits on a single video suggests real demand, but pricing at scale will determine whether it's practical for most creators.

GUIDES

Learn a new software tool hands-free with Clicky

Recaply: In this tutorial, you will learn how to use Clicky, a free Mac app that looks at your screen on demand and answers spoken questions in real time, so you can pick up any new tool while using it.

Step-by-step:

Download Clicky from clicky.so (free, macOS 14+, works on Apple Silicon and Intel). Install the app, then grant it screen recording and microphone access under System Settings > Privacy & Security.

Open the software tool you want to learn, such as Figma, DaVinci Resolve, or a new codebase. Launch Clicky and position its overlay next to your cursor.

Press the hotkey set in Clicky's preferences to trigger a screenshot. Clicky captures exactly what's on screen and sends it to Claude for context.

Ask your question out loud: "How do I color grade this clip?" or "What does this function do?" Clicky processes the screenshot and answers out loud, pointing at the part of the screen it's talking about.

Keep working and repeat as needed. Each hotkey press gives Clicky a fresh screenshot, so answers stay accurate as your screen changes.

Pro tip: Set your hotkey to something you can press without looking, like a function key, so you can trigger Clicky mid-flow without breaking your focus.

ENTERPRISE AI

Nearly a third of the Fortune 500 pays for AI

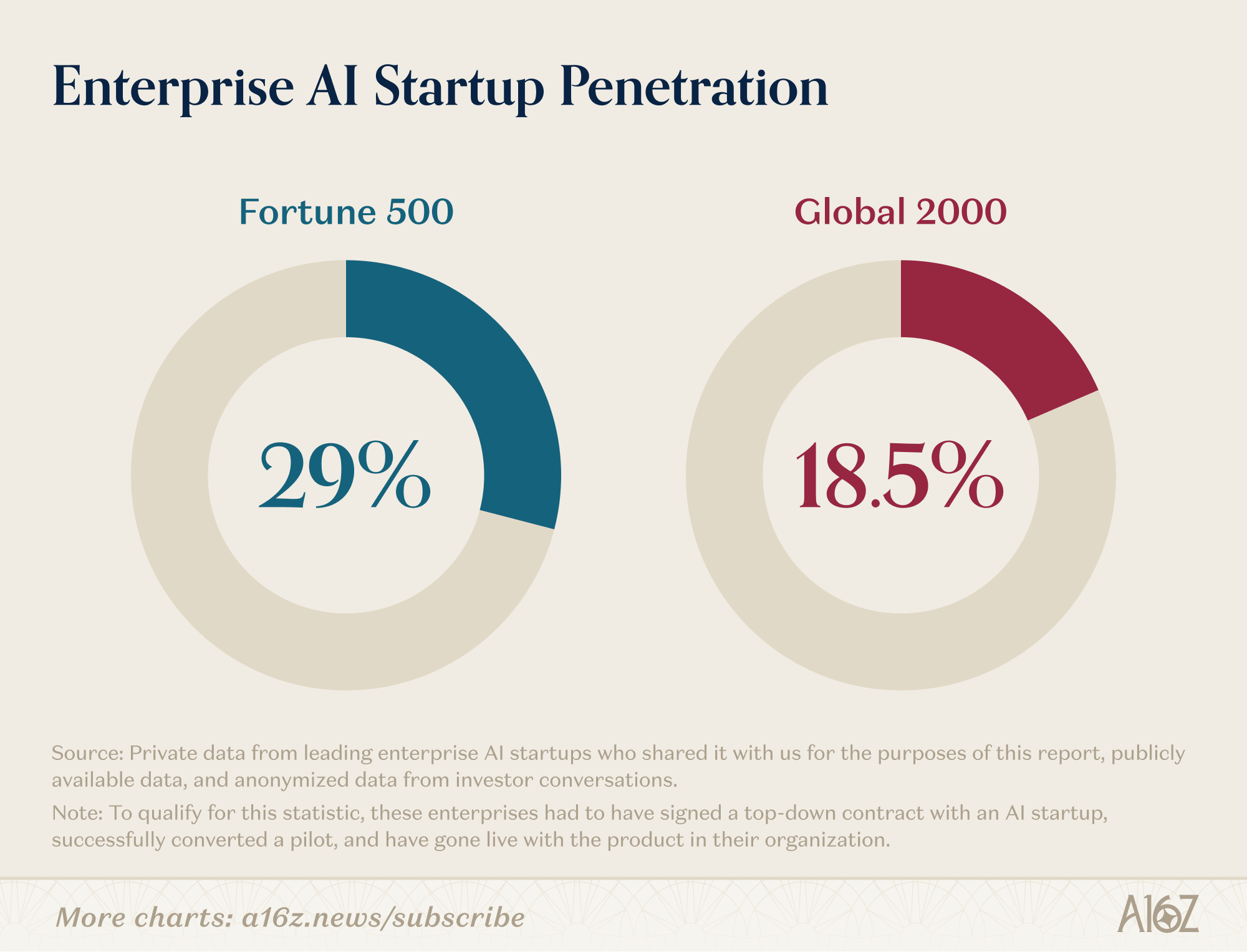

Recaply: a16z just published hard adoption data showing 29% of the Fortune 500 and roughly 19% of the Global 2000 are live, paying AI customers, with coding as the top use case by a wide margin.

Key details:

To count as a live customer, a company had to sign a top-down contract, convert from a pilot, and go live. Trials and self-reported surveys don't qualify.

By that standard, 29% of the Fortune 500 (roughly 145 companies) and roughly 19% of the Global 2000 are active paying customers. Tech, legal, and healthcare lead by sector.

a16z partner Kimberly Tan said the data counters MIT research claiming 95% of AI pilots fail. That stat came from self-reported surveys, not verified contracts.

Coding ranks first as a use case by nearly an order of magnitude. Support and search are second and third.

Why it matters: There's been a lot of talk about AI pilots failing and enterprise hype being overblown. a16z's contract-based data says otherwise. Nearly a third of the Fortune 500 isn't a rounding error. The coding finding is the more telling detail: enterprise AI has moved past testing and into workflows where output is measurable. That's a different story from the 95% failure stat that's dominated the past year.

TOOLS

Trending AI Tools

🖥️ Clicky - Free, open-source Mac app that sits next to your cursor, takes a screenshot on a hotkey, and answers voice questions about your screen using Claude and ElevenLabs.

🧠 MemPalace - Open-source AI memory system

🎥 Avatar V - HeyGen's new AI video avatar tool

🤖 Muse Spark - Meta's first model from Meta Superintelligence Labs

NEWS

What Matters in AI Right Now?

Anthropic just launched Claude Managed Agents in public beta, enabling companies like Notion and Asana to run dozens of AI tasks in parallel. Anthropic says teams can go from prototype to launch in days.

An Ohio man pleaded guilty to cyberstalking and to generating sexually explicit AI images of more than 10 victims, becoming the first person convicted under the Take It Down Act, the new federal law targeting nonconsensual intimate images, including AI-generated ones.

The OpenAI Foundation announced more than $100M in grants to six research institutions focused on AI-accelerated Alzheimer's research, with funding covering drug design, biomarker discovery, and intervention testing.

Nebius, which had a $32B market cap at the time of the reports, is in talks to acquire Israeli AI startup AI21 Labs after a previously reported deal with Nvidia fell through.

Applied Compute raised $80M to build what it calls "specific intelligence": AI platforms tailored to enterprise use cases that, according to the company, improve the more they're used.

Google added NotebookLM-style Notebooks to Gemini, letting users organize long-running projects with up to 100 sources for free. The web rollout starts with Ultra subscribers and works its way down to free-tier users.

A coalition including Anthropic, Meta, IBM, and Microsoft launched the Shared AI License Foundation, contributing more than 33,000 patent families to a collaborative patent network for foundation model development.

GenSpark launched AI Workspace 4.0 with a desktop app featuring Computer Use and Browser Use capabilities, plus Office plugins for PowerPoint, Excel, and Word, and a live translation feature called Speakly.

Google expanded AI-driven market insights in Google Finance to more than 100 countries including Australia, Brazil, Canada, Japan, and Mexico, adding real-time research, earnings transcript analysis, and crypto tracking.

Cursor updated its AI coding tool with remote control capabilities, allowing developers to run Cursor on any machine and control it from anywhere, including starting agents on a dev box from their phone.

🧡 Enjoyed this issue?

🤝 Recommend our newsletter or leave feedback.

How'd you like today's newsletter?

Cheers, Jason