Good morning, AI enthusiasts. OpenAI's Sora lasted just six months before the liability stopped being worth it. Downloads fell 66%, revenue barely hit $2M, and a $1B Disney licensing deal collapsed before a dollar changed hands.

That's what happens when a deepfake social app meets a company trying to become the everything platform. Does OpenAI's tightening focus signal what the race actually looks like from the inside?

In today's recap:

OpenAI kills Sora, Disney pulls $1B licensing deal

Amazon enters humanoid robotics with Fauna acquisition

Automate feature work with Claude Code's new Auto Mode

OpenAI completes Spud pre-training, new model imminent

4 new AI tools, prompts, and more

OPENAI & DISNEY

OpenAI kills Sora as Disney exits $1B deal

Recaply: OpenAI just shut down its standalone Sora app, folding video generation into an upcoming super app, while Disney pulled its $1B licensing deal and character rights at the same time.

Key details:

Sora's video generation will move inside OpenAI's upcoming super app, combining it with other AI tools rather than running as a standalone product, according to a statement from the Sora team.

Downloads fell 66% since launch, with total revenue barely reaching $2M. The licensing deal Disney pulled was valued at $1B and would have given OpenAI rights to generate content with Disney characters.

According to reports, Disney's exit wasn't purely financial. The studio had raised concerns about deepfake risks and the use of its characters in AI-generated content without adequate controls.

Sora launched as a standalone app six months ago. The app shuts down immediately, with video features set to move to OpenAI's broader platform in Q2 2026.

Why it matters: Sora was OpenAI's biggest creative bet outside of text, and it lasted six months. Disney's exit signals that even major studios won't hand over IP rights without stronger safeguards. With video generation folded into a super app, OpenAI is betting bundling matters more than standalone products. Losing a $1B deal before it started shows the creative market isn't ready to trust AI with its most valuable assets.

PRESENTED BY HUBSPOT

The Future of AI in Marketing. Your Shortcut to Smarter, Faster Marketing.

Unlock a focused set of AI strategies built to streamline your work and maximize impact. This guide delivers the practical tactics and tools marketers need to start seeing results right away:

7 high-impact AI strategies to accelerate your marketing performance

Practical use cases for content creation, lead gen, and personalization

Expert insights into how top marketers are using AI today

A framework to evaluate and implement AI tools efficiently

Stay ahead of the curve with these top strategies AI helped develop for marketers, built for real-world results.

OPENAI

OpenAI completes Spud pre-training, new model imminent

Recaply: OpenAI just completed pre-training on a new model internally called "Spud," with CEO Sam Altman saying the pace of development is moving faster than most people expected.

Key details:

Pre-training is the first major stage of model development, where the model learns from massive datasets. Completing it means Spud will next go through fine-tuning and safety testing before any public release.

The Reddit post reporting this gained 432 upvotes on r/singularity, reflecting how closely the community tracks OpenAI's release pace. Altman's comments suggest a shorter timeline than previous model cycles.

The Information first reported the pre-training completion. Altman's public signal about faster-than-expected development is notable given the competitive pressure from Google's Gemini 3.1 and Anthropic's recent releases.

No official release date has been confirmed. Based on typical timelines after pre-training, Spud could enter public testing as early as Q2 2026.

Why it matters: OpenAI has been on defense since Google's Gemini 3.1 topped SWE-bench this week. Finishing Spud pre-training signals the team won't stay there for long. Altman's public comment about faster-than-expected development is rare, and the timing looks deliberate. It's still unclear whether Spud arrives as a focused update or a full flagship challenger, but the race for the top benchmark spot just got more interesting.

TUTORIAL

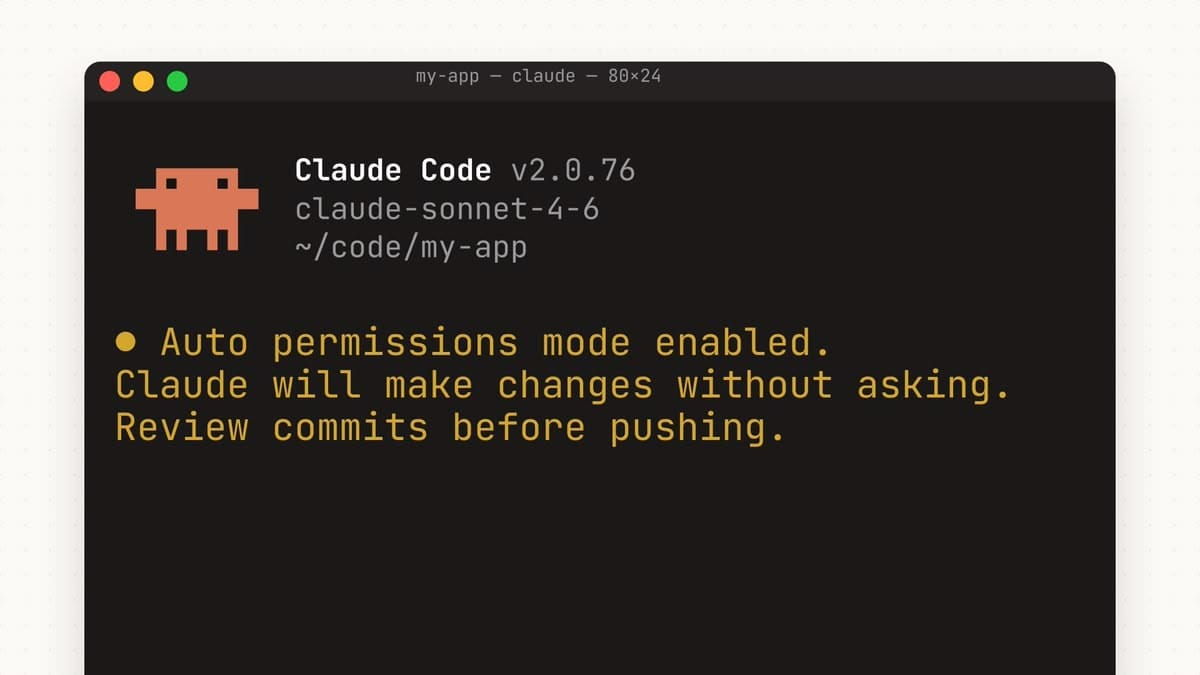

Automate feature work with Claude Code's new Auto Mode

Recaply: In this tutorial, you will learn how to hand off a full coding feature to Claude Code using Auto Mode, letting it read files, write code, and run tests end-to-end without step-by-step supervision.

Step-by-step:

Make sure you're on the Claude Team plan, then open your project directory in the terminal and run `npm install -g @anthropic-ai/claude-code` to install the CLI. Open the project you want to work on and run `claude` to start a session.

Type `/auto` in the Claude Code session to activate Auto Mode. Claude will confirm the mode switch and display which permissions are active. For best results, run this on a dedicated feature branch rather than directly on main.

Describe the feature you want built in plain language. Be specific about the tech stack and constraints, for example: "Add email and password authentication using JWT tokens. Use our existing Express setup and don't change the database schema." The more context you give, the cleaner the output.

Let Claude run. In Auto Mode, it reads files, writes code, runs your test suite, and logs every action it takes. Watch the permission log to see what it's doing. If something looks off, type a correction in real time and it will adjust without restarting.

Review the output and check the generated pull request or staged changes. If the result needs refinement, reply with specific feedback in the same session, such as "the login route is missing rate limiting," and Claude will revise without starting over.

Pro tip: Start with a well-scoped, isolated feature. Auto Mode works best when the task has clear boundaries. Avoid vague goals like "improve the codebase" on the first run. Once you're comfortable with how it behaves, you can expand the scope.
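The setup steps above can be sketched as a small shell script. This is a cautious, dry-run sketch rather than an official workflow: the branch name `feature/jwt-auth` and the `run` and `setup_steps` helpers are made up for illustration, and only the `npm install` and `claude` commands come from the tutorial itself. By default it just prints each command so you can review it first.

```shell
#!/usr/bin/env bash
# Dry-run sketch of the tutorial's setup steps.
# By default each command is only printed; set RUN=1 to actually execute it.

# Hypothetical branch name for illustration (not from the tutorial).
BRANCH="${BRANCH:-feature/jwt-auth}"

# Helper (assumption): print the command, or execute it when RUN=1.
run() {
  if [ "${RUN:-0}" = "1" ]; then
    "$@"
  else
    printf '+ %s\n' "$*"
  fi
}

setup_steps() {
  # Work on a dedicated feature branch rather than directly on main.
  run git checkout -b "$BRANCH"
  # Install the Claude Code CLI globally (command given in the tutorial).
  run npm install -g @anthropic-ai/claude-code
  # Start an interactive Claude Code session in the current project.
  run claude
}

setup_steps
# Inside the session, the tutorial then has you type /auto to switch modes.
```

Run it as-is to preview the three commands, then rerun with `RUN=1` from your project directory once they look right. The mode switch and the feature description still happen interactively inside the Claude Code session.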

AMAZON

Amazon buys Fauna Robotics, enters humanoid race

Recaply: Amazon just acquired Fauna Robotics, a maker of humanoid robots described as "approachable," making its first direct bet on embodied AI as competitors race to add physical robots to their platforms.

Key details:

Fauna Robotics builds humanoid robots designed for warehouse and logistics environments. Amazon plans to deploy them across its fulfillment network, with the "approachable" design built for side-by-side work with human employees rather than full automation.

Amazon operates over 1,000 fulfillment centers globally. Google announced a new partnership with Agile Robots the same day, marking the first time both companies have made major embodied AI moves in a single news cycle.

Fauna Robotics operated in stealth for two years before the acquisition. Founders described the robot as built for human-robot collaboration, not replacement, which set it apart from other humanoid players in the market.

Fauna is expected to become an Amazon Robotics subsidiary. Amazon has not confirmed a deployment timeline, but fulfillment center integration is expected to begin in late 2026.

Why it matters: Amazon's acquisition of Fauna Robotics isn't just about warehouse efficiency. It's a signal that the robotics race is no longer a side bet for tech platforms. Google's Agile Robots partnership dropped the same day, making it the first time two of the biggest companies in tech both moved on embodied AI in a single news cycle. The question now is whether Microsoft, Meta, or Apple is next.

TOOLS

Trending AI Tools

🔧 Lovable Pentesting - Lovable's first penetration testing feature for AI-generated apps

🎨 Figma MCP - Figma's new MCP server that lets AI agents read and manipulate design canvases directly

⚙️ TurboQuant - Google Research's extreme model compression tool

🎬 Grok Imagine - xAI's new multi-image to video and video extension tool

NEWS

What Matters in AI Right Now?

Anthropic released Auto Mode for Claude Code on its Team plan, letting agents run coding tasks fully autonomously without step-by-step approval. The feature logs every action the agent takes and keeps human guardrails in place via a permission system.

Security researchers revealed that a popular Python package with 97 million monthly downloads had been poisoned in a supply chain attack, stealing SSH keys, AWS credentials, and crypto wallets from infected machines. Andrej Karpathy flagged the breach, calling it one of the most damaging package-level attacks this year.

Apple is planning a major Siri reboot for iOS 27, including a dedicated app, a new look, and an "Ask Siri" button accessible from any screen. The redesign follows years of criticism that Siri has fallen behind competitors like ChatGPT and Google Gemini.

Baltimore became the first US city to sue xAI over Grok-generated deepfake pornography, targeting the company under state law for failing to prevent the creation of non-consensual imagery. The lawsuit could set a precedent for how cities hold AI companies accountable for harmful outputs.

Arm released its first in-house chip in the company's 35-year history, called the AGI CPU, designed to run AI workloads at the edge without relying on cloud infrastructure. The move signals Arm's shift from a pure IP licensing model to becoming a full hardware competitor.

Google's Gemini 3.1 topped the SWE-bench leaderboard for software engineering tasks, beating OpenAI and Anthropic models in automated code repair and bug-fixing benchmarks. The result adds pressure on OpenAI, which has just finished pre-training its next model.

An Oregon attorney was fined a record $16,500 for citing AI-hallucinated case law in court filings, the largest penalty handed down in a US legal case involving AI-generated citations. The ruling signals courts are escalating consequences as lawyers continue to submit fabricated references.

Bill Gates said only three kinds of jobs will survive AI's rise (therapists, educators, and skilled tradespeople), arguing that AI won't be able to replicate deep human connection or hands-on physical work. The comments add to a growing list of executive predictions about which roles AI will eliminate first.

🧡 Enjoyed this issue?

🤝 Recommend our newsletter or leave feedback.

How'd you like today's newsletter?

Cheers, Jason